I just can't imagine how ILP would be listening for changes to push over otherwise.

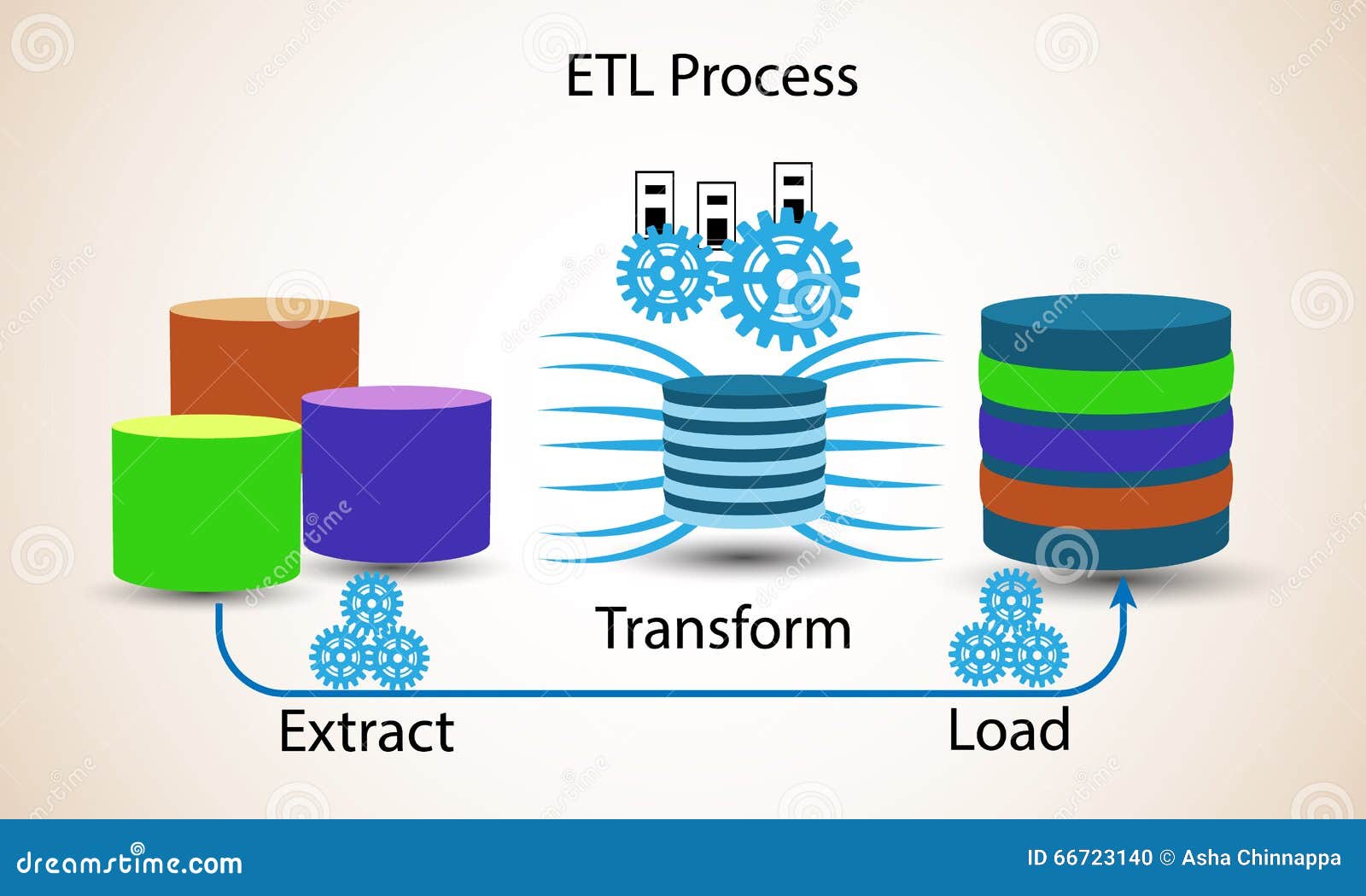

However, if ILP will push data real-time and handle a large percentage of our needs, we may implement it.įrom the posts I've read, it sounds as if we need to implement Publisher/Subscriber flavor of Ethos Integration, and not just the Proxy API. It seems to process the files on the half-hour, so rather than reinvent the wheel with the email summary we're currently leaning towards keeping the status quo. I've roughly implemented posting the CSV files to canvas, but I like how the SFTP upload sends us an email summary at the end of processing. We're currently using informer to generate CSV files which we then upload via SFTP to Canvas. Watch the brief video below to learn why the market is shifting.I am researching ILP v5 to see what the pros/cons are. See a side-by-side review of 10 key areas in the ETL vs ELT Comparison Matrix. As a result, building data warehouses with ETL tools can be time-consuming, cumbersome, and error-prone - introducing delays and unnecessary risk into BI projects that require the most up-to-date data, and the agility to react quickly to changing business demands. This is why this process is appropriate for small data sets which require complex transformations.īuilding and maintaining a data warehouse can require hundreds or thousands of ETL tool programs. The benefit is that analysis can take place immediately once the data is loaded. The entire data set must be transformed before loading, so transforming large data sets can take a lot of time up front. In the ETL process, transformation is performed in a staging area outside of the data warehouse and before loading it into the data warehouse. This is why this process is more appropriate for larger, structured and unstructured data sets and when timeliness is important. But if there is not sufficient processing power in the cloud solution, transformation can slow down the querying and analysis processes. This means that this process takes less time. 
In the ELT process, data transformation is performed on an as-needed basis within the target system. This can be a significant challenge for the traditional ETL pipeline and on premises data warehouses. Today, your business has to process many types of data and a massive volume of data. The ETL process is more appropriate for small data sets which require complex transformations. The ELT process is most appropriate for larger, nonrelational, and unstructured data sets and when timeliness is important.

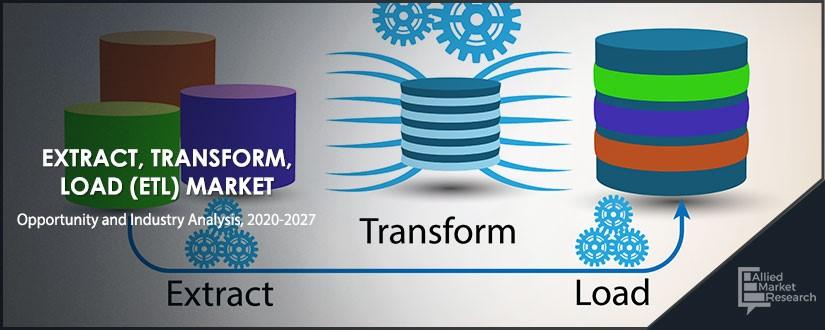

The main difference between the two processes is how, when and where data transformation occurs. Examples of transformations include data mapping, replacing codes with values and applying concatenations or calculations. Data transformation refers to converting the structure or format of a data set to match that of the target system. The second step involves placing the data into the target system, typically a cloud data warehouse, where it is ready to be analyzed by BI tools or data analytics tools.

This extracted data is often stored temporarily in a staging area in a database to confirm data integrity and to apply any necessary business rules. A data extraction tool pulls data from a source or sources such as SQL or NoSQL databases, cloud platforms or XML files. The ELT process is broken out as follows:Įxtract. ELT and cloud-based repositories are more scalable, more flexible, and allow you to move faster. ELT and cloud-based data warehouses and data lakes are the modern alternative to the traditional ETL pipeline and on-premises hardware approach to data integration.
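The ETL vs ELT difference comes down to how, when, and where the transformation runs. To make that concrete, here is a minimal Python sketch that runs the same transformation both ways against an in-memory SQLite database, which stands in for the target warehouse. The sketch makes several assumptions:

- The source rows, the column names, and the status-code mapping are hypothetical.
- The ETL path transforms rows in a Python staging step before loading them.
- The ELT path loads the raw rows first, then defines the transformation as a SQL view, so it only runs when someone queries it.

It is an illustration under those assumptions, not a production pipeline.

```python
import sqlite3

# Hypothetical source rows, as an extraction tool might pull them from a
# source system (for example a CSV export). Field names are illustrative only.
SOURCE_ROWS = [
    {"id": 1, "first_name": "Ada", "last_name": "Lovelace", "status": "A"},
    {"id": 2, "first_name": "Alan", "last_name": "Turing", "status": "I"},
    {"id": 3, "first_name": "Grace", "last_name": "Hopper", "status": "X"},
]

# Hypothetical code-to-value mapping used as the transformation rule.
STATUS_NAMES = {"A": "active", "I": "inactive"}


def extract():
    """Extract: copy the source rows into a staging list."""
    staged = [dict(row) for row in SOURCE_ROWS]
    # Integrity check in the staging area: every row needs an id.
    if any(row["id"] is None for row in staged):
        raise ValueError("staged row is missing an id")
    return staged


def etl(conn):
    """ETL: transform in the staging area, then load only the finished rows."""
    conn.execute("CREATE TABLE etl_people (id INTEGER, full_name TEXT, status TEXT)")
    transformed = [
        (
            row["id"],
            row["first_name"] + " " + row["last_name"],  # concatenation
            STATUS_NAMES.get(row["status"], "unknown"),  # replace code with value
        )
        for row in extract()
    ]
    conn.executemany("INSERT INTO etl_people VALUES (?, ?, ?)", transformed)


def elt(conn):
    """ELT: load the raw rows first, then transform inside the target system."""
    conn.execute(
        "CREATE TABLE raw_people (id INTEGER, first_name TEXT, last_name TEXT, status TEXT)"
    )
    conn.executemany(
        "INSERT INTO raw_people VALUES (:id, :first_name, :last_name, :status)",
        extract(),
    )
    conn.execute("CREATE TABLE status_names (code TEXT, name TEXT)")
    conn.executemany("INSERT INTO status_names VALUES (?, ?)", STATUS_NAMES.items())
    # The transformation is a view, so it is computed on demand at query time.
    conn.execute(
        """
        CREATE VIEW elt_people AS
        SELECT r.id,
               r.first_name || ' ' || r.last_name AS full_name,
               COALESCE(s.name, 'unknown') AS status
        FROM raw_people AS r
        LEFT JOIN status_names AS s ON s.code = r.status
        """
    )


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    etl(conn)
    elt(conn)
    etl_rows = conn.execute("SELECT * FROM etl_people ORDER BY id").fetchall()
    elt_rows = conn.execute("SELECT * FROM elt_people ORDER BY id").fetchall()
    print(etl_rows)
    print(etl_rows == elt_rows)
```

Running the script prints `[(1, 'Ada Lovelace', 'active'), (2, 'Alan Turing', 'inactive'), (3, 'Grace Hopper', 'unknown')]` followed by `True`. Both paths produce the same rows. What differs is whether the mapping and concatenation execute before loading or inside the target system.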
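The ILP question above comes down to push versus a scheduled batch: something has to notice a change and deliver it. The following Python sketch contrasts the two models in a single process. `ChangeBroker` and `PollingExporter` are hypothetical stand-ins, not Ethos Integration or ILP APIs, and the topic and field names are made up. The sketch only shows why a real-time push integration needs a publisher/subscriber channel, whereas a file-based job waits for its next scheduled run.

```python
from collections import defaultdict
from typing import Callable, Dict, List


class ChangeBroker:
    """Hypothetical in-process broker standing in for a change-notification service."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[dict], None]) -> None:
        # A consumer registers interest in a topic once, up front.
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, change: dict) -> None:
        # Push: every subscriber is called as soon as the change is published.
        for handler in self._subscribers[topic]:
            handler(change)


class PollingExporter:
    """Hypothetical batch job: changes accumulate until the next scheduled run."""

    def __init__(self) -> None:
        self.pending: List[dict] = []
        self.exported: List[List[dict]] = []

    def record(self, change: dict) -> None:
        self.pending.append(change)

    def run_batch(self) -> None:
        # Called on a schedule (for example every half hour); ships everything at once.
        self.exported.append(self.pending)
        self.pending = []


if __name__ == "__main__":
    broker = ChangeBroker()
    received: List[dict] = []
    broker.subscribe("enrollments", received.append)  # hypothetical topic name
    batch = PollingExporter()

    change = {"person": "12345", "course": "HIST-101", "action": "add"}  # made-up data
    broker.publish("enrollments", change)
    batch.record(change)

    print(len(received))        # the subscriber already has the change
    print(len(batch.exported))  # nothing has been sent until run_batch()
    batch.run_batch()
    print(len(batch.exported))
```

The script prints `1`, `0`, `1`. In the push model the subscriber has the change immediately. In the batch model the change sits in `pending` until the scheduled run, which mirrors the half-hourly CSV processing described above.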
